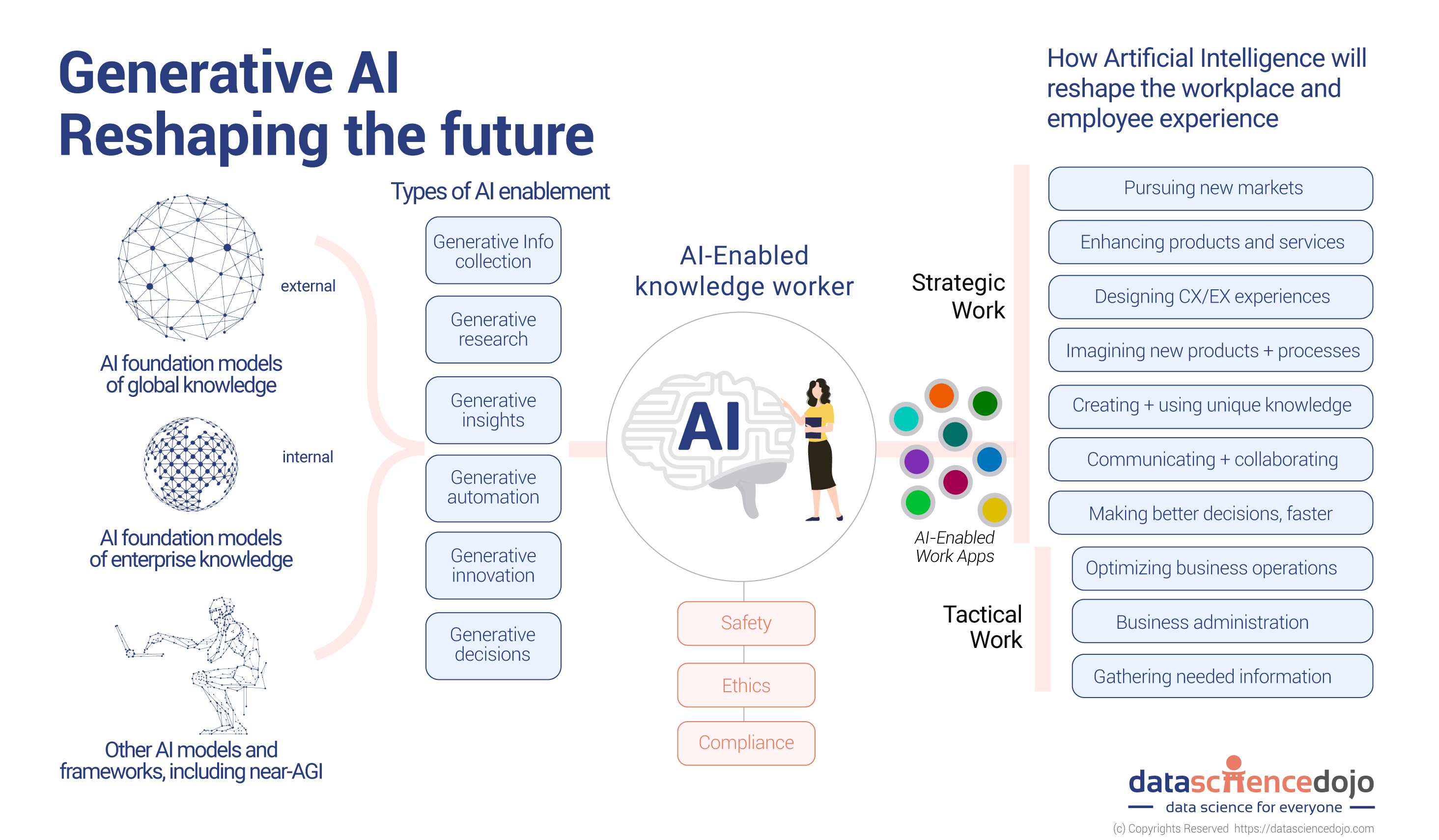

Generative AI has business applications beyond those covered by discriminative models. Let's look at the main model types that can be used for a wide range of problems and that achieve impressive results. Various algorithms and related models have been developed and trained to create new, realistic content from existing data. Some of these models, each with distinct mechanisms and capabilities, are at the forefront of advances in fields such as image generation, text translation, and data synthesis.

A generative adversarial network, or GAN, is a machine learning framework that pits two neural networks, a generator and a discriminator, against each other, hence the "adversarial" part. The contest between them is a zero-sum game, where one agent's gain is the other agent's loss. GANs were invented by Ian Goodfellow and his colleagues at the University of Montreal in 2014.

The discriminator outputs a value between 0 and 1. The closer the output is to 0, the more likely the input is fake; conversely, values closer to 1 indicate a higher likelihood that the input is real. Both the generator and the discriminator are often implemented as CNNs (convolutional neural networks), especially when working with images. The adversarial nature of GANs thus lies in a game-theoretic scenario in which the generator network must compete against an adversary.
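
To make the 0-to-1 scoring concrete, here is a minimal sketch of the binary cross-entropy losses commonly used to train the two networks, written in plain Python. The function names `discriminator_loss` and `generator_loss` are hypothetical, and the generator loss uses the widely used "non-saturating" variant; in a real GAN the probabilities would come from the discriminator network rather than being typed in by hand.

```python
import math

def discriminator_loss(d_real, d_fake, eps=1e-12):
    """Binary cross-entropy: real samples should score 1, generated samples 0."""
    real_term = -sum(math.log(p + eps) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p + eps) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake, eps=1e-12):
    """Non-saturating generator loss: low when fakes are scored close to 1."""
    return -sum(math.log(p + eps) for p in d_fake) / len(d_fake)

# A discriminator that is completely fooled outputs 0.5 for everything.
print(discriminator_loss([0.5, 0.5], [0.5, 0.5]))
# The generator "wins" when its fakes get scores near 1.
print(generator_loss([0.9]), generator_loss([0.1]))
```

A perfect discriminator (real scored 1, fake scored 0) drives its loss toward 0, while a fully fooled one that outputs 0.5 everywhere sits at 2·ln 2 ≈ 1.386, which is why the two losses pull in opposite directions.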

Its adversary, the discriminator network, tries to distinguish between samples drawn from the training data and samples produced by the generator. In this scenario, there is always a winner and a loser. Whichever network fails is updated, while its rival remains unchanged. A GAN is considered successful when the generator creates a fake sample so convincing that it can fool both the discriminator and humans.
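
The one-network-at-a-time updating can be sketched with a deliberately tiny one-dimensional GAN in NumPy. Everything here is an illustrative assumption rather than a production recipe: a linear generator `a * z + b`, a logistic-regression discriminator `sigmoid(w * x + c)`, "real" data drawn from N(4, 1), hand-derived gradients, and hypothetical function names. Each `d_step` changes only the discriminator and each `g_step` changes only the generator, so the rival's weights stay fixed during every update.

```python
import numpy as np

def sigmoid(s):
    return 1.0 / (1.0 + np.exp(-s))

def d_step(g, d, x_real, z, lr):
    """Update the discriminator (w, c); the generator (a, b) is held fixed."""
    a, b = g
    w, c = d
    x_fake = a * z + b
    d_real = sigmoid(w * x_real + c)
    d_fake = sigmoid(w * x_fake + c)
    # Gradients of -mean(log D(real)) - mean(log(1 - D(fake))) w.r.t. w and c.
    grad_w = np.mean((d_real - 1.0) * x_real) + np.mean(d_fake * x_fake)
    grad_c = np.mean(d_real - 1.0) + np.mean(d_fake)
    return (w - lr * grad_w, c - lr * grad_c)

def g_step(g, d, z, lr):
    """Update the generator (a, b); the discriminator (w, c) is held fixed."""
    a, b = g
    w, c = d
    x_fake = a * z + b
    d_fake = sigmoid(w * x_fake + c)
    # Per-sample gradient of the non-saturating loss -log D(fake) w.r.t. x_fake;
    # np.mean below applies the 1/batch averaging.
    upstream = (d_fake - 1.0) * w
    grad_a = np.mean(upstream * z)
    grad_b = np.mean(upstream)
    return (a - lr * grad_a, b - lr * grad_b)

def train(steps=500, batch=64, lr=0.05, seed=0):
    rng = np.random.default_rng(seed)
    g = (1.0, 0.0)   # generator starts far from the real data
    d = (0.0, 0.0)   # discriminator starts undecided (outputs 0.5 everywhere)
    for _ in range(steps):
        x_real = rng.normal(4.0, 1.0, batch)   # hypothetical "real" data: N(4, 1)
        d = d_step(g, d, x_real, rng.normal(size=batch), lr)
        g = g_step(g, d, rng.normal(size=batch), lr)
    return g, d

g, d = train()
print("generator (scale, shift):", g)
```

The toy only shows the structure of the alternating updates. Even at this scale, GAN training can oscillate around the target instead of settling neatly, which is one reason practical GANs rely on deeper networks, autodiff frameworks, and careful tuning.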

Described in a 2017 Google paper, the transformer architecture is a machine learning framework that is highly effective for natural language processing (NLP) tasks. It learns to find patterns in sequential data such as written text or spoken language. Based on the context, the model can predict the next element of a sequence, for example, the next word in a sentence.

A vector represents the semantic characteristics of a word, with similar words having vectors that are close in value. For example, a word might be represented by a vector such as [6.5, 6, 18]. Of course, these vectors are just illustrative; real ones have many more dimensions.
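
How "close" two word vectors are is commonly measured with cosine similarity, the cosine of the angle between them. The sketch below uses made-up three-dimensional vectors for three hypothetical words; the numbers are illustrative only and do not come from any real model.

```python
import math

# Made-up 3-dimensional embeddings, for illustration only; real models
# learn vectors with hundreds or thousands of dimensions.
toy_embeddings = {
    "apple": [6.2, 6.8, 17.5],
    "pear": [6.5, 6.0, 18.0],
    "crown": [3.0, 95.0, 30.0],
}

def cosine_similarity(u, v):
    """Cosine of the angle between u and v: 1.0 means the same direction."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

for other in ("pear", "crown"):
    sim = cosine_similarity(toy_embeddings["apple"], toy_embeddings[other])
    print(f"apple vs {other}: {sim:.3f}")
```

With these toy values, "apple" comes out much closer to "pear" than to "crown", which is the property a trained embedding is meant to capture for semantically related words.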

At this stage, information about the position of each token within a sequence is added in the form of another vector, which is summed with the input embedding. The result is a vector reflecting both the word's initial meaning and its position in the sentence. This vector is then fed into the transformer neural network, which consists of two blocks.
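
One well-known way to build the position vectors is the fixed sine/cosine scheme from the 2017 transformer paper; the sketch below assumes that scheme (many newer models learn position vectors or use other encodings instead). The embeddings are random placeholders and the function name `sinusoidal_positions` is hypothetical. Note that the position vector is added element-wise, so the model input keeps the same shape as the embeddings.

```python
import numpy as np

def sinusoidal_positions(seq_len, d_model):
    """Fixed sine/cosine position vectors; assumes d_model is even."""
    positions = np.arange(seq_len)[:, None]          # shape (seq_len, 1)
    even_dims = np.arange(0, d_model, 2)[None, :]    # shape (1, d_model // 2)
    angles = positions / np.power(10000.0, even_dims / d_model)
    pe = np.zeros((seq_len, d_model))
    pe[:, 0::2] = np.sin(angles)   # even dimensions use sine
    pe[:, 1::2] = np.cos(angles)   # odd dimensions use cosine
    return pe

# Hypothetical input: 4 tokens, each with an 8-dimensional embedding.
rng = np.random.default_rng(0)
token_embeddings = rng.normal(size=(4, 8))

# Position information is summed with (not appended to) each embedding.
model_input = token_embeddings + sinusoidal_positions(4, 8)
print(model_input.shape)
```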

Mathematically, the relationships between words in a phrase resemble distances and angles between vectors in a multidimensional vector space. The self-attention mechanism can detect the subtle ways in which even distant data elements in a sequence influence and depend on each other. For example, in the sentences "I poured water from the pitcher into the cup until it was full" and "I poured water from the pitcher into the cup until it was empty," a self-attention mechanism can distinguish the meaning of "it": in the former case, the pronoun refers to the cup; in the latter, to the pitcher.
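
The core computation of self-attention is scaled dot-product attention, softmax(QKᵀ/√d)·V. The single-head NumPy sketch below is a simplification under stated assumptions: random matrices stand in for the learned projection weights, and there is no masking, no multiple heads, and no surrounding layers. Each row of `weights` says how much one token attends to every token in the sequence, which is how a word like "it" can pick up information from "cup" or "pitcher".

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention (no masking)."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    # How strongly each token (row) attends to every other token (column).
    scores = q @ k.T / np.sqrt(k.shape[-1])
    weights = softmax(scores, axis=-1)
    # Each output row is a weighted mix of all value vectors.
    return weights @ v, weights

# Random matrices stand in for learned projection weights (hypothetical).
rng = np.random.default_rng(0)
seq_len, d_model, d_head = 5, 8, 4
x = rng.normal(size=(seq_len, d_model))
w_q, w_k, w_v = (rng.normal(size=(d_model, d_head)) for _ in range(3))
out, weights = self_attention(x, w_q, w_k, w_v)
print(out.shape, weights.shape)
```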

A softmax function is used at the end to calculate the probability of different outputs and select the most probable option. The generated output is then appended to the input, and the whole process repeats.

The diffusion model is a generative model that creates new data, such as images or sounds, by mimicking the data on which it was trained.

Think of the diffusion model as an artist-restorer who has studied paintings by old masters and can now paint canvases in the same style. The diffusion model does roughly the same thing in three main stages. Forward diffusion gradually adds noise to the original image until the result is just a chaotic set of pixels.
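
The noise-adding stage can be sketched with the closed-form shortcut used in DDPM-style models, which jumps directly to any noise level t instead of adding noise one step at a time. The linear schedule of 1,000 steps and the tiny random "image" are assumptions for illustration; `forward_diffuse` is a hypothetical helper name, and steps are 0-based here.

```python
import numpy as np

T = 1000
# Linear noise schedule (an assumption borrowed from DDPM-style models).
betas = np.linspace(1e-4, 0.02, T)
# alpha_bar[t] = product of (1 - beta) up to step t: how much signal survives.
alpha_bar = np.cumprod(1.0 - betas)

def forward_diffuse(x0, t, rng):
    """Jump straight to noise level t (0-based) in one shot."""
    noise = rng.normal(size=x0.shape)
    xt = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * noise
    return xt, noise

rng = np.random.default_rng(0)
image = rng.uniform(-1.0, 1.0, size=(8, 8))   # hypothetical tiny "image" in [-1, 1]
for t in (0, 250, 999):
    xt, _ = forward_diffuse(image, t, rng)
    # Correlation with the original image shrinks as the noise takes over.
    print(t, np.corrcoef(image.ravel(), xt.ravel())[0, 1])
```

By the last step almost none of the original signal survives, which matches the "chaotic set of pixels" the forward process is meant to produce.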

Returning to our artist-restorer example, forward diffusion is handled by time, which covers the painting with a network of cracks, dust, and grease; sometimes the painting is reworked, with certain details added and others removed. Training is like studying a painting to grasp the old master's original intent: the model carefully analyzes how the added noise alters the data.
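
In DDPM-style models, learning "how the added noise alters the data" is typically framed as noise prediction: corrupt a clean sample at a random step and train a network to recover the exact noise that was added. The sketch below shows one such training example under that assumption; `model` is a hypothetical placeholder for a real network (such as a U-Net), and no optimizer step is shown.

```python
import numpy as np

def noise_prediction_loss(model, x0, alpha_bar, rng):
    """Noise x0 at a random step and score the model's guess of that noise."""
    t = int(rng.integers(len(alpha_bar)))           # random noise level
    noise = rng.normal(size=x0.shape)
    xt = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * noise
    predicted_noise = model(xt, t)
    # Mean squared error between the true and the predicted noise.
    return float(np.mean((noise - predicted_noise) ** 2))

# Placeholder "model" that always predicts zero noise (hypothetical stand-in
# for a trained neural network).
def zero_model(xt, t):
    return np.zeros_like(xt)

alpha_bar = np.cumprod(1.0 - np.linspace(1e-4, 0.02, 1000))
rng = np.random.default_rng(0)
x0 = rng.uniform(-1.0, 1.0, size=(8, 8))
print(noise_prediction_loss(zero_model, x0, alpha_bar, rng))
```

Training repeats this over many samples and steps, nudging the network's weights to lower the loss; a network that could predict the noise perfectly would drive it to zero.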

This understanding enables the model to effectively reverse the process later. After training, the model can reconstruct the distorted data through a process called reverse diffusion. It starts from a noise sample and removes the blur step by step, the same way our artist removes impurities and later layers of paint.
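
The step-by-step denoising can be sketched with the ancestral sampling loop from DDPM (Ho et al., 2020), assuming the variance choice σₜ² = βₜ. `predict_noise` stands for a trained noise-prediction network and is hypothetical here. The demo swaps in an "oracle" that cheats by knowing the target image, so it reproduces that image exactly; it only illustrates the mechanics of the loop, not what a real model would generate.

```python
import numpy as np

def reverse_diffuse(x_T, predict_noise, betas, rng):
    """DDPM-style ancestral sampling: walk from step T-1 back to step 0."""
    alphas = 1.0 - betas
    alpha_bar = np.cumprod(alphas)
    x = x_T
    for t in range(len(betas) - 1, -1, -1):
        eps_hat = predict_noise(x, t)
        # Remove the predicted noise component and rescale.
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bar[t]) * eps_hat) / np.sqrt(alphas[t])
        if t > 0:
            # Add a little fresh noise at every step except the last.
            x = mean + np.sqrt(betas[t]) * rng.normal(size=x.shape)
        else:
            x = mean
    return x

betas = np.linspace(1e-4, 0.02, 1000)
alpha_bar = np.cumprod(1.0 - betas)
rng = np.random.default_rng(0)
target = rng.uniform(-1.0, 1.0, size=(8, 8))

# Hypothetical oracle standing in for a trained network: it "knows" the target.
def oracle(x, t):
    return (x - np.sqrt(alpha_bar[t]) * target) / np.sqrt(1.0 - alpha_bar[t])

restored = reverse_diffuse(rng.normal(size=(8, 8)), oracle, betas, rng)
print(np.abs(restored - target).max())
```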

Latent representations contain the fundamental elements of the data, allowing the model to regenerate the original data from this encoded essence. In that sense, they resemble DNA: change the molecule just a little, and you get a completely different organism.
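
The encode-then-regenerate idea can be illustrated with the simplest possible stand-in: principal component analysis (PCA) used as a linear encoder and decoder. This is a deliberate swap, not the technique the paragraph refers to; generative models learn nonlinear encoders and decoders (for example, in variational autoencoders). The function names are hypothetical, and the demo data is constructed to lie on a 2-D plane so that a 2-number latent code captures it fully.

```python
import numpy as np

def fit_linear_codec(data, k):
    """Learn a k-dimensional linear latent space via PCA (SVD of centered data)."""
    mean = data.mean(axis=0)
    _, _, vt = np.linalg.svd(data - mean, full_matrices=False)
    return mean, vt[:k]              # top-k principal directions

def encode(x, mean, components):
    """Compress each point to k latent numbers."""
    return (x - mean) @ components.T

def decode(z, mean, components):
    """Regenerate points from their latent codes."""
    return z @ components + mean

# Hypothetical 5-D data that secretly lies on a 2-D plane.
rng = np.random.default_rng(0)
data = rng.normal(size=(100, 2)) @ rng.normal(size=(2, 5)) + 3.0
mean, components = fit_linear_codec(data, k=2)
codes = encode(data, mean, components)
print(codes.shape, np.abs(decode(codes, mean, components) - data).max())
```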

Say, the girl in the second picture from the top right looks a bit like Beyoncé but, at the same time, we can see that it's not the pop singer. As the name suggests, image-to-image translation transforms one type of image into another. There is a range of image-to-image translation variants. One of them is style transfer: this task involves extracting the style from a famous painting and applying it to another image.
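
One well-known way to measure "style", from the neural style transfer work of Gatys et al. (2015), is to compare Gram matrices of CNN feature maps: they record which feature channels fire together while ignoring where in the image they fire. The sketch assumes features would come from a pretrained CNN such as VGG; random arrays stand in for them here, and the function names are hypothetical. A full method would also add a content loss and optimize the output image.

```python
import numpy as np

def gram_matrix(features):
    """Channel-by-channel correlations of a (channels, height, width) feature map."""
    c, h, w = features.shape
    flat = features.reshape(c, h * w)
    return flat @ flat.T / (c * h * w)

def style_loss(generated_features, style_features):
    """Mean squared difference between the two Gram matrices."""
    diff = gram_matrix(generated_features) - gram_matrix(style_features)
    return float(np.mean(diff ** 2))

rng = np.random.default_rng(0)
style = rng.normal(size=(16, 32, 32))     # hypothetical CNN features of the painting
content = rng.normal(size=(16, 32, 32))   # hypothetical CNN features of the photo
print(style_loss(content, style))
# The Gram matrix ignores *where* features occur, so flipping the map spatially
# leaves the style loss at (numerically) zero.
print(style_loss(style[:, ::-1, :], style))
```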

The outputs of all these programs are fairly similar. Some users note that, on average, Midjourney draws a bit more expressively, while Stable Diffusion follows the prompt more precisely at default settings.

Researchers have also used GANs to produce synthesized speech from text input.

The music may also change according to the atmosphere of a game scene or the intensity of the user's workout in the gym.

So, logically, videos can also be generated and converted in much the same way as images. While 2023 was marked by breakthroughs in LLMs and a boom in image generation technologies, 2024 has seen significant advances in video generation. At the start of 2024, OpenAI introduced a truly impressive text-to-video model called Sora. Sora is a diffusion-based model that generates video from static noise.

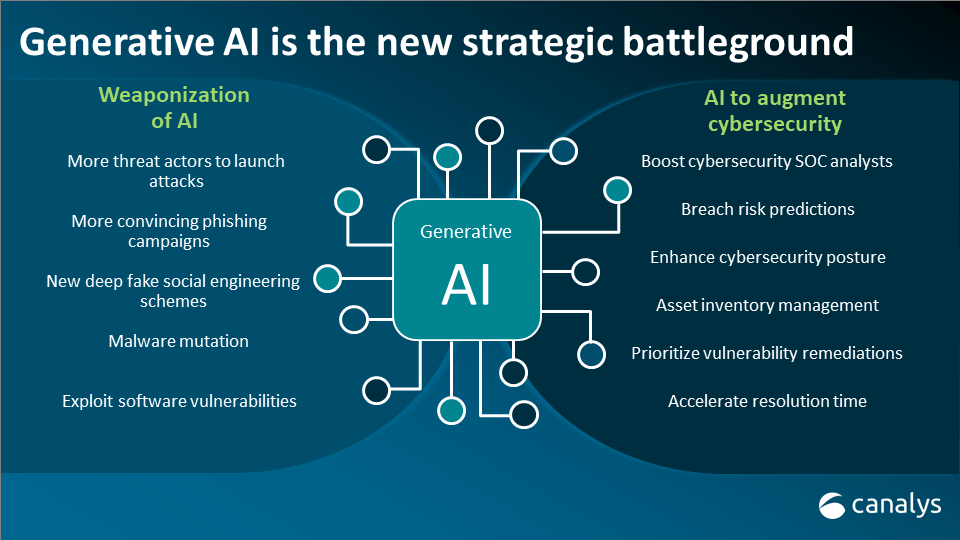

NVIDIA's Interactive AI Rendered Virtual World

Such artificially created data can help develop self-driving cars, which can use generated virtual-world training datasets for pedestrian detection. Of course, generative AI is no exception.

When we say this, we don't mean that tomorrow machines will rise up against humanity and destroy the world. Let's be honest, we're pretty good at that ourselves. However, because generative AI can self-learn, its behavior is difficult to control, and the outputs it produces can often be far from what you expect.

That's why so many companies are implementing dynamic and intelligent conversational AI models that customers can interact with via text or speech. In addition to customer service, AI chatbots can supplement marketing efforts and support internal communications.
